GhostDrift Publishes Lean 4 Formal Proof for ADIC

A Machine-Checkable Foundation for Third-Party AI Assurance

JAPAN, May 15, 2026 /EINPresswire.com/ -- ADIC can turn AI governance claims into replayable evidence, supported by a machine-checkable Lean 4 proof of its replay-verification core. The core soundness theorem is now publicly available for anyone to re-check independently, and no registration is required.

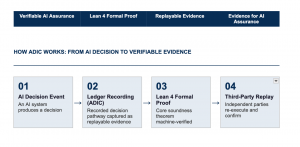

GhostDrift releases a Lean 4 formal proof artifact for ADIC's replay-verification core, showing that when the ADIC verifier accepts a replay certificate, that certificate satisfies the corresponding semantic-validity condition.

In plain terms: AI governance claims can be turned into evidence that a third party can independently replay, check, and challenge. The guarantee that an accepted certificate is semantically valid is not a policy statement or a promise; it is a property proved in Lean 4.

GhostDrift Mathematical Institute, based in Tokyo, has published a formally verified Lean 4 proof of the soundness of the replay-verification core of ADIC (Advanced Data Integrity by Ledger of Computation). The proof artifacts are available on GitHub and Zenodo without registration.

The core theorem, verifierBool_sound, states that if the verifier accepts a certificate, that certificate is semantically valid. This is not an engineering claim or an operational policy. It is a formally verified theorem that anyone who downloads the proof artifact can check independently.
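To illustrate the general shape of a soundness theorem like this, the toy Lean 4 sketch below pairs an executable Boolean checker with a logical validity predicate and proves that acceptance implies validity. It is not the ADIC artifact. Only the name `verifierBool_sound` comes from this release; the `Step` record, the `rule` function, and the `Valid` predicate are hypothetical stand-ins chosen for illustration, and the published theorem's definitions and proof may differ.

```lean
-- HYPOTHETICAL toy model, not ADIC's actual definitions.
-- A certificate is a list of recorded steps: an input and the output the system claimed.
structure Step where
  input  : Nat
  output : Nat

-- HYPOTHETICAL decision rule that a replay re-executes.
def rule (x : Nat) : Nat := x * 2

-- Semantic validity (a Prop): every recorded output matches the replayed rule.
def Valid (cert : List Step) : Prop :=
  ∀ s ∈ cert, s.output = rule s.input

-- Executable checker (a Bool): replays each step and compares outputs.
def verifierBool (cert : List Step) : Bool :=
  cert.all (fun s => s.output == rule s.input)

-- Soundness: if the checker returns true, the certificate is semantically valid.
theorem verifierBool_sound (cert : List Step) (h : verifierBool cert = true) :
    Valid cert := by
  unfold Valid
  intro s hs
  unfold verifierBool at h
  -- `List.all_eq_true` turns the Boolean `all` into a statement about every member.
  have hstep := List.all_eq_true.mp h s hs
  -- `simpa` rewrites `(a == b) = true` to `a = b` and closes the goal.
  simpa using hstep

-- Usage: a passing check yields a proof of validity for a concrete certificate.
example : Valid [⟨1, 2⟩, ⟨3, 6⟩] := verifierBool_sound _ (by decide)

-- A tampered certificate (7 ≠ 3 * 2) is rejected by the checker.
#eval verifierBool [⟨1, 2⟩, ⟨3, 7⟩]  -- false
```

The design point the sketch shows is the split between an executable check (`Bool`) and a logical specification (`Prop`): the soundness theorem connects the two, so a `true` result from the checker can be converted into a machine-checked proof of validity, as the `example` line does.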

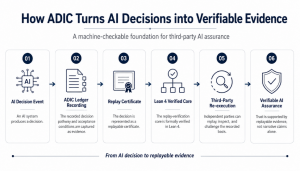

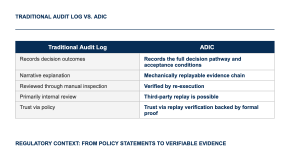

ADIC records not only what an AI system decided, but also the full decision pathway and acceptance conditions, in a form that third parties can mechanically replay and confirm without relying solely on the original operator's account.

Global AI regulation and assurance frameworks are increasingly emphasizing evidence, documentation, risk management, transparency, and accountability beyond policy statements alone. The EU AI Act emphasizes technical documentation and conformity assessment for high-risk AI systems. NIST AI RMF structures AI risk management around Govern, Map, Measure, and Manage. The UK's AI assurance policy emphasizes measuring, evaluating, and communicating the trustworthiness of AI systems, including the role of reliable evidence and independent assurance.

ADIC builds on this direction by recording AI decisions as replayable certificates and by formally verifying the replay-verification core in Lean 4. It helps move AI assurance from evidence that is merely reviewed toward evidence that can be independently re-executed.

STATEMENT

"Monitoring AI and narrating it is not the same as proving it. What ADIC implements is re-verification accountability — the ability for regulators, auditors, counterparties, or dispute resolution bodies to retrace an AI's decision pathway, verify its basis, or challenge it. The Lean 4 formal proof we publish demonstrates that the replay-verification core is not merely a roadmap. It is available now for independent inspection."

— Hidemitsu Maeki, CEO, GhostDrift Mathematical Institute, Inc.

FIRST IMPLEMENTATION TARGET: PHARMACEUTICAL LOGISTICS COLD CHAIN

ADIC's first implementation target is pharmaceutical cold-chain logistics, where the problem ADIC addresses is most sharply defined. In this high-responsibility setting, decisions cross multiple organizations and must remain auditable after the fact: shippers, logistics providers, warehouses, and downstream counterparties must be able to verify who accepted which condition, under what criteria, and with what evidence.

In April 2026, GhostDrift entered a strategic partnership with ONZALINX Co., Ltd., an ISO/IEC 27001-certified logistics technology company that provides AI- and mathematical-optimization-based solutions for real-world logistics operations.

The ongoing proof of concept (PoC) tests whether ADIC's replayable evidence architecture can support the level of auditability required in multi-party, regulated logistics operations. If it is validated in pharmaceutical logistics, the architecture could serve as a foundation for deployment in other high-responsibility domains with similar structures.

Additional target sectors include financial decision-making and public-sector AI.

Verify the Proof

The Lean 4 proof artifact is public and independently re-executable.

[Run it yourself: GitHub Repository]

[Technical partnerships and PoC inquiries: Contact]

GLOSSARY

ADIC — Advanced Data Integrity by Ledger of Computation. Technology that records AI decision pathways as replayable certificates, enabling mechanical re-execution by independent third parties.

Lean 4 — A theorem prover and programming language for mechanically verifying mathematical theorems and software properties. Proofs written in Lean 4 are checked by a small trusted kernel, so their correctness does not depend on human review.

AI Assurance — A state or mechanism by which the behavior of an AI system can be confirmed through independently verifiable evidence, as distinct from governance policies or narrative audits alone.

About GhostDrift Mathematical Institute

GhostDrift Mathematical Institute develops AI assurance infrastructure for high-responsibility domains. Its core product, ADIC, connects AI governance claims to reproducible evidence, independent verification, and formal proof. Founded February 2026. 4-4-4 Kita-shinjuku, Shinjuku-ku, Tokyo.

GLOBAL

Global PR Wire

3-6427-1627


Legal Disclaimer:

EIN Presswire provides this news content "as is" without warranty of any kind. We do not accept any responsibility or liability for the accuracy, content, images, videos, licenses, completeness, legality, or reliability of the information contained in this article. If you have any complaints or copyright issues related to this article, kindly contact the author above.